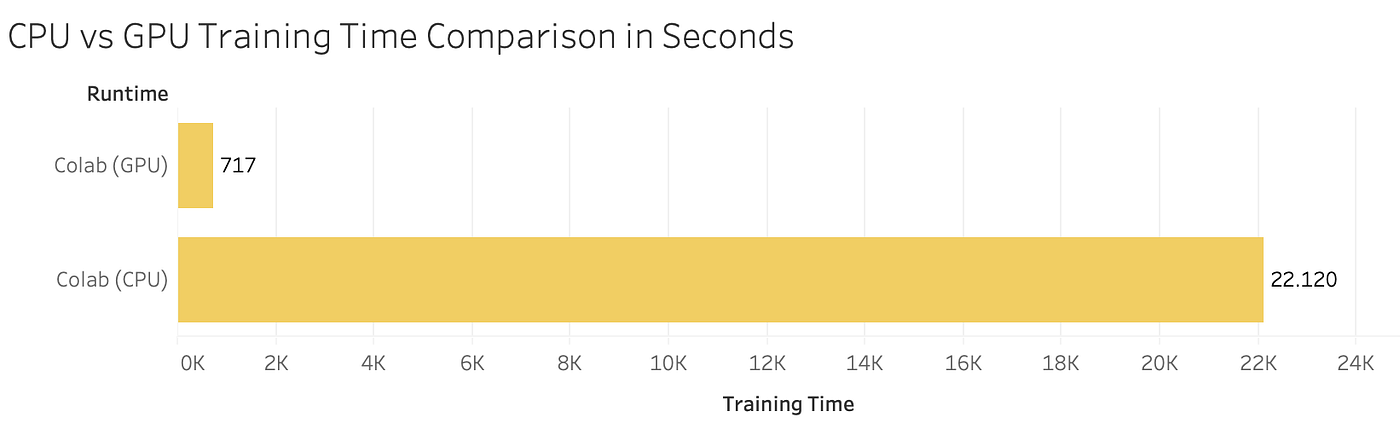

PyTorch: Switching to the GPU. How and Why to train models on the GPU… | by Dario Radečić | Towards Data Science

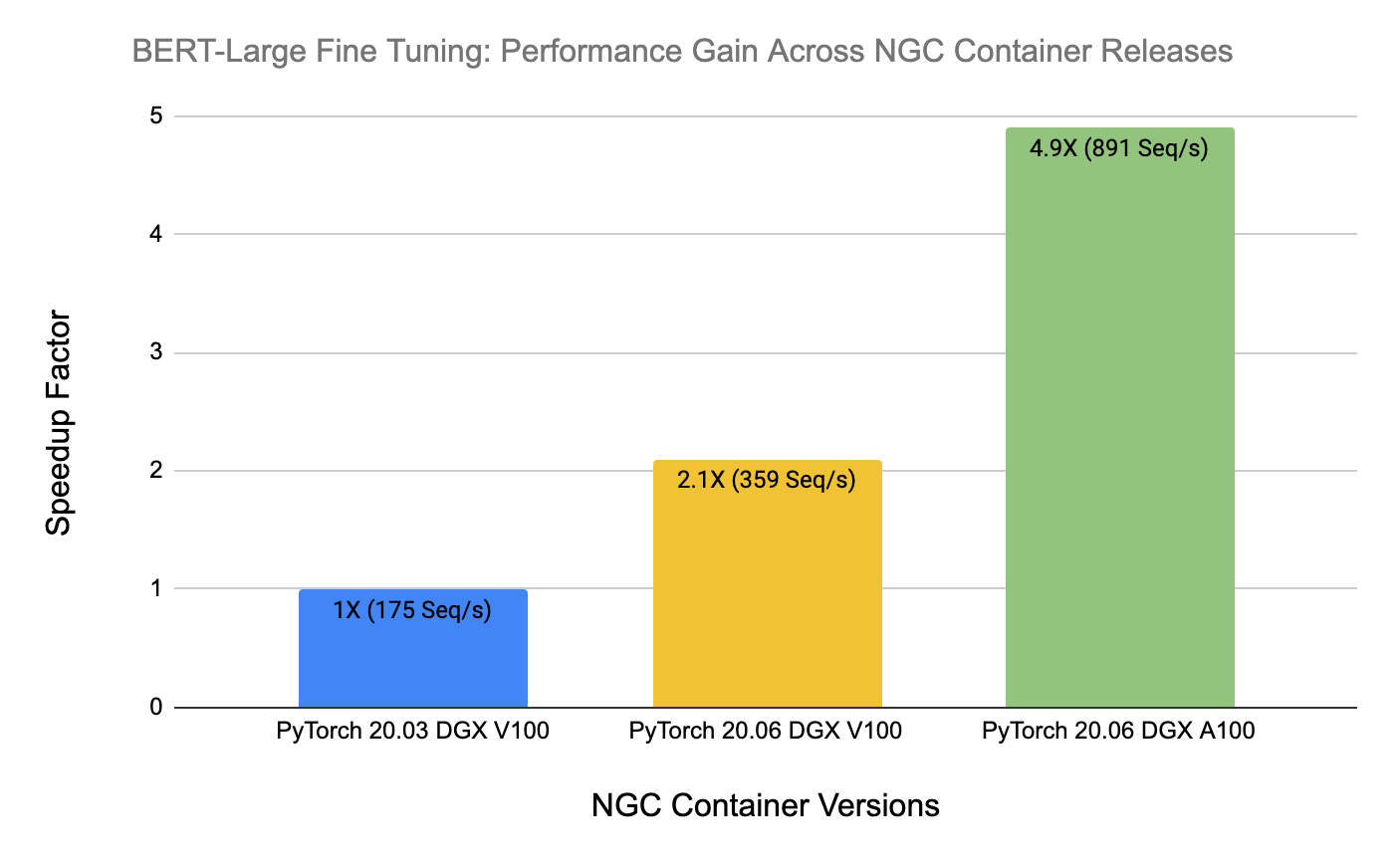

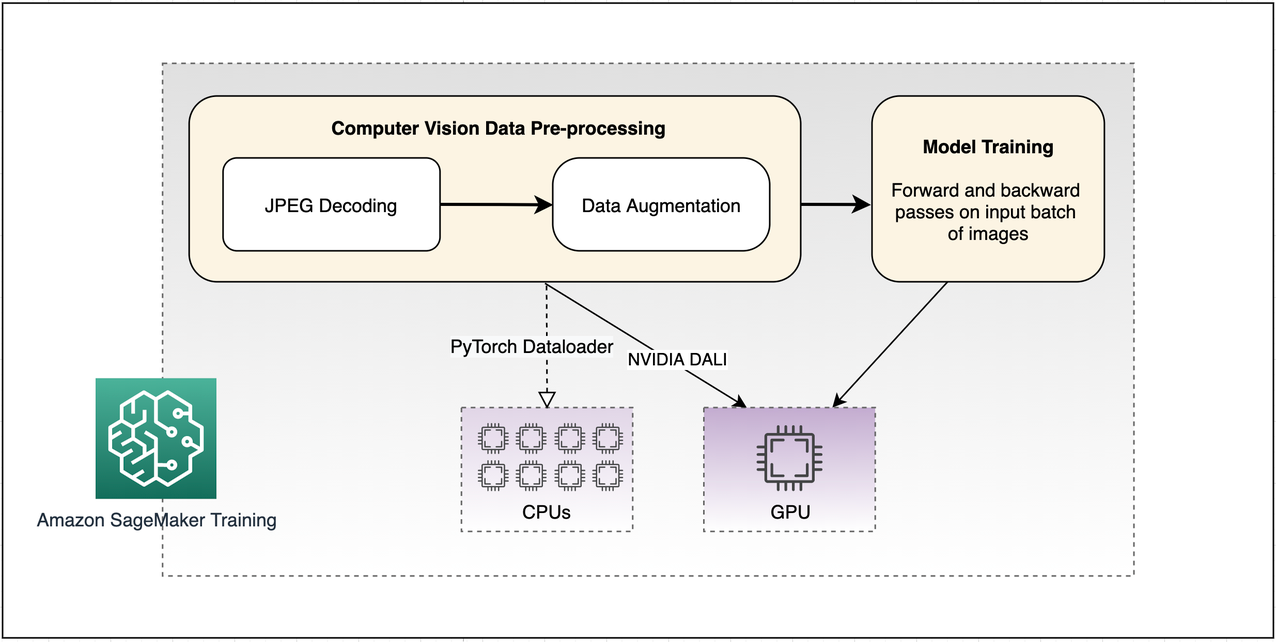

Accelerate computer vision training using GPU preprocessing with NVIDIA DALI on Amazon SageMaker | AWS Machine Learning Blog

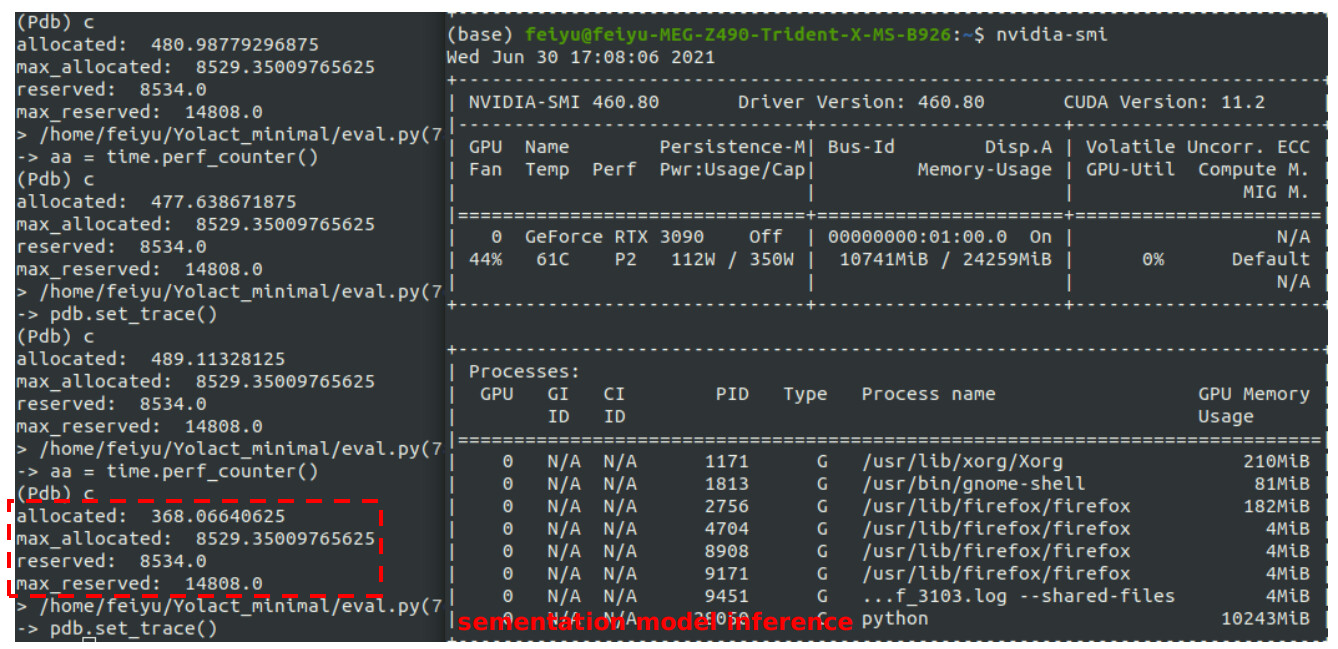

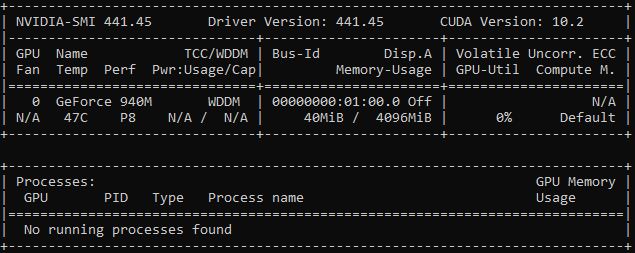

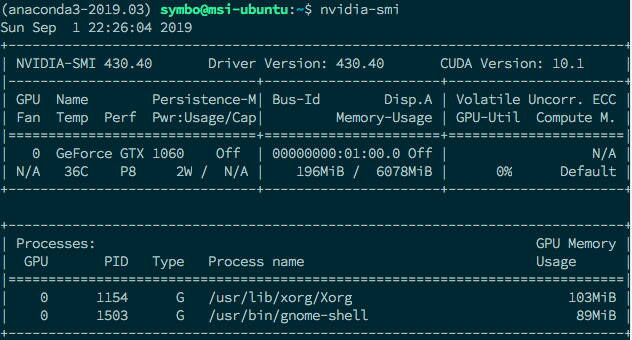

Use GPU in your PyTorch code. Recently I installed my gaming notebook… | by Marvin Wang, Min | AI³ | Theory, Practice, Business | Medium